Jacob Seifert, Ph.D.

Postdoctoral researcher developing algorithms at the intersection of computational imaging, optics, and machine learning.

Weblinks to my projects and publications

Check out my work on: Google Scholar | YouTube | GitHub | LinkedIn | Email

More Content

Previous Projects

Auto-Differentiable Ptychography

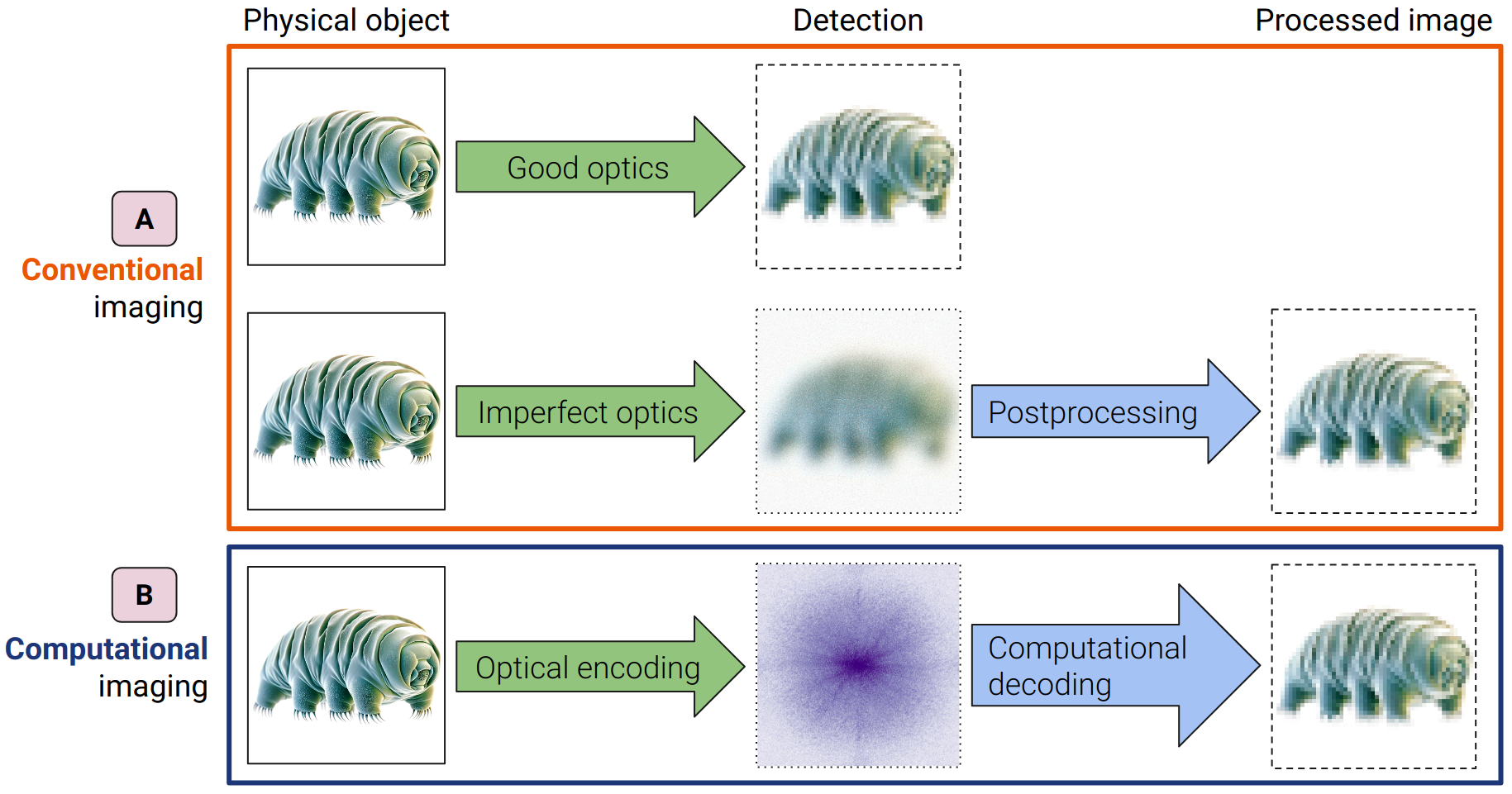

Computational imaging is an imaging paradigm that aims to overcome the limitations of traditional imaging systems. Instead of forming a sharp image directly on a sensor, we measure indirect data (e.g., diffraction patterns) and use a computer to reconstruct the image. This allows us to build simpler imaging systems and to recover information that would otherwise be lost, such as the phase of the light, which a sensor cannot record directly.

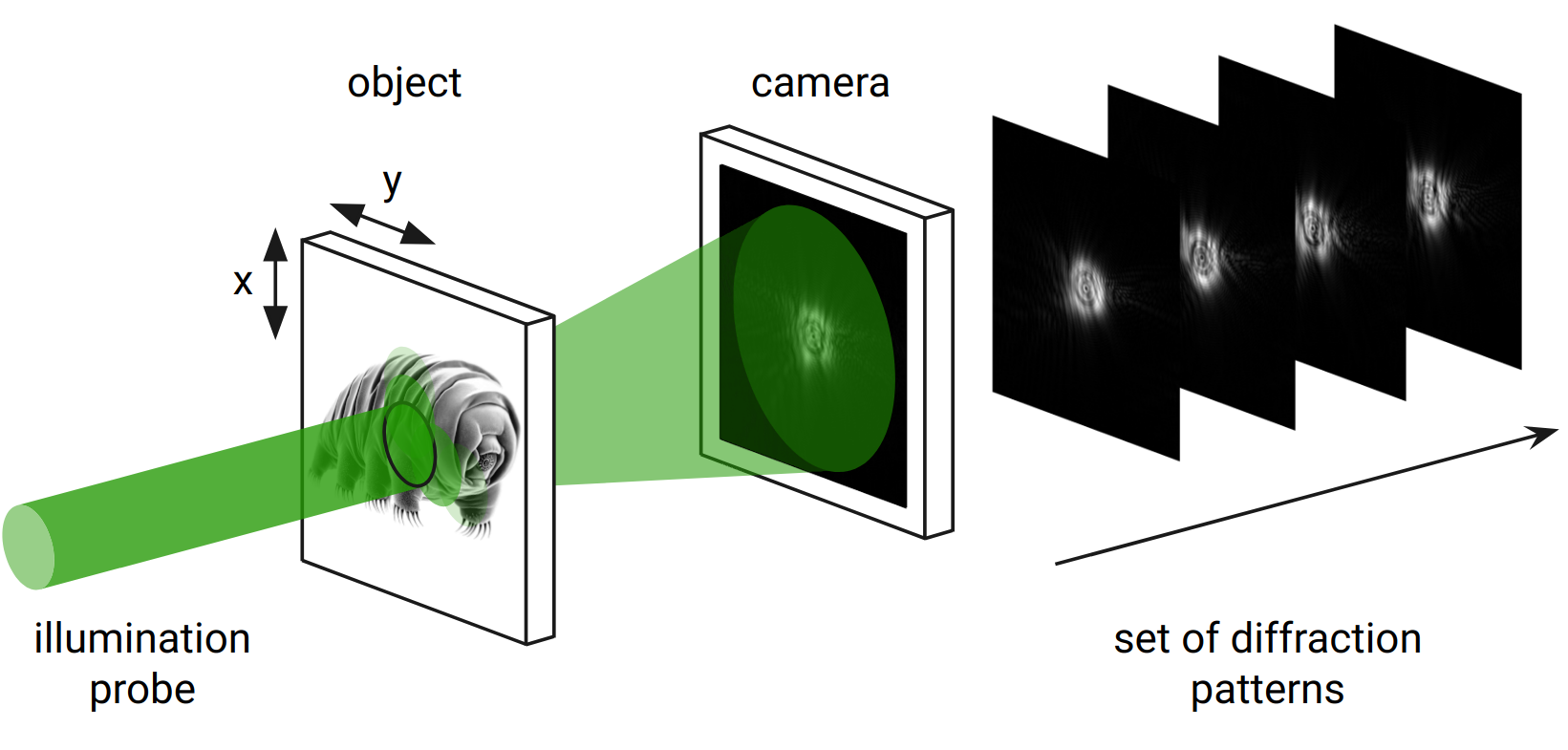

Ptychography is a computational imaging method that reconstructs an image of a sample from a series of diffraction patterns. A localized illumination (the probe) is scanned across the sample, and a diffraction pattern is recorded at each position. Because neighboring illuminated regions overlap, the patterns contain redundant information, which allows an algorithm to reconstruct the complex-valued transmission function of the sample, i.e., both its amplitude and its phase.
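To make the measurement process concrete, here is a minimal NumPy sketch of a simplified ptychographic forward model. It assumes a thin sample (exit wave = probe × object patch), far-field propagation modelled by a single FFT, and integer-pixel scan positions. The function name `simulate_ptychography` and all example parameters are illustrative and not taken from the published framework.

```python
import numpy as np


def simulate_ptychography(obj, probe, positions):
    """Simulate far-field ptychographic diffraction intensities.

    obj       : 2D complex array, sample transmission function O(r)
    probe     : 2D complex array, localized illumination P(r)
    positions : list of (row, col) offsets of the probe within obj
    Returns an array of shape (len(positions), *probe.shape).
    """
    h, w = probe.shape
    patterns = []
    for r, c in positions:
        # Thin-sample approximation: exit wave = probe * illuminated patch
        exit_wave = probe * obj[r:r + h, c:c + w]
        # Far-field (Fraunhofer) propagation modelled as a centred 2D FFT
        far_field = np.fft.fftshift(np.fft.fft2(exit_wave, norm="ortho"))
        # The detector records only the intensity; the phase is lost
        patterns.append(np.abs(far_field) ** 2)
    return np.stack(patterns)


# Example: phase-only object scanned by a circular probe on a 5 x 5 grid
rng = np.random.default_rng(0)
obj = np.exp(1j * rng.uniform(0, np.pi, (64, 64)))
yy, xx = np.mgrid[-16:16, -16:16]
probe = (xx**2 + yy**2 < 10**2).astype(complex)
positions = [(r, c) for r in range(0, 33, 8) for c in range(0, 33, 8)]
data = simulate_ptychography(obj, probe, positions)
print(data.shape)  # (25, 32, 32)
```

The 8-pixel scan step is much smaller than the 20-pixel probe diameter, so neighboring positions overlap strongly; this redundancy is what a reconstruction algorithm exploits to recover the lost phase.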

My Ph.D. work in this field has focused on making ptychography more robust and accessible. Here are some selected contributions:

- Open-source AD ptychography: We developed an open-source ptychography framework based on automatic differentiation (AD) and TensorFlow. Because gradients are computed automatically, only the forward model and the loss function need to be specified, which makes it easier for researchers to develop and test new reconstruction algorithms. This work was published in OSA Continuum.

- Fine-tuning for challenging noise conditions: We developed a method that accounts for the mixed Poisson-Gaussian noise statistics (photon shot noise combined with detector read noise) often present in experimental data. This improves reconstruction quality, especially at low signal-to-noise ratios. This work was published in Optics Letters, and the code is also open-source.

- Semiconductor application and metrology: We demonstrated ptychography for the metrology of semiconductor nanostructures and developed a wavelength-multiplexed reconstruction algorithm that copes with the instabilities of the extreme ultraviolet (EUV) sources used in this application. This work was published in Light: Science & Applications.

-

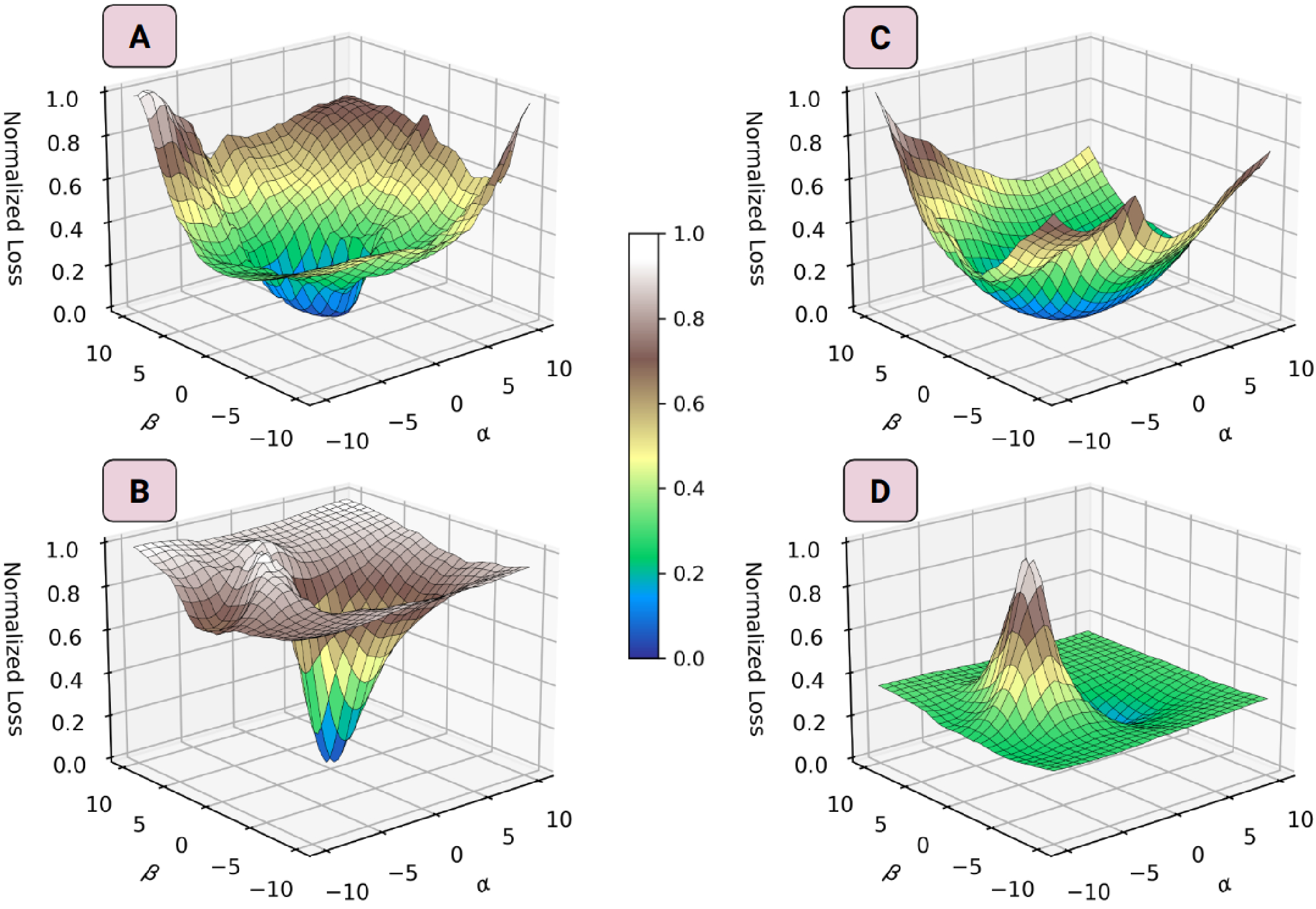

Deep generative models for ptychography: Natural images are often sparse in a latent space. We can visualize this with a latent space walk, which shows smooth transitions within an object class. Implicit rank-minimized autoencoders can be used for this.

Then, we use this representation in a lower dimension for noise robustness. What I found cool is that we can now approximately visualize the loss landscape of this usually high-dimensional optimization problem (millions of parameters) to study the convexity properties in different noise conditions. This work was published in Optics Express.
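The AD framework computes the gradient of the reconstruction loss automatically. To show what that gradient looks like without depending on TensorFlow, here is a NumPy sketch of gradient-descent object reconstruction in which the gradient of an amplitude loss is written out by hand (Wirtinger calculus). It assumes a known probe, noise-free data, and a conservative fixed step size of 1/max(summed probe intensity); the helper names `fwd`, `adj` and `reconstruct` are hypothetical and not part of the published framework.

```python
import numpy as np


def fwd(x):
    # Unitary far-field propagator: orthonormal, centred 2D FFT
    return np.fft.fftshift(np.fft.fft2(x, norm="ortho"))


def adj(x):
    # Adjoint of fwd (equal to its inverse, since fwd is unitary)
    return np.fft.ifft2(np.fft.ifftshift(x), norm="ortho")


def reconstruct(amplitudes, probe, positions, obj_shape, n_iter=50):
    """Recover the object by gradient descent on the amplitude loss
    L(O) = sum_j || |fwd(P * O_j)| - a_j ||^2, with the probe P known."""
    h, w = probe.shape
    obj = np.ones(obj_shape, dtype=complex)  # flat initial guess
    # Summed probe intensity per object pixel; its maximum bounds the step size
    illum = np.zeros(obj_shape)
    for r, c in positions:
        illum[r:r + h, c:c + w] += np.abs(probe) ** 2
    step = 1.0 / illum.max()
    losses = []
    for _ in range(n_iter):
        grad = np.zeros(obj_shape, dtype=complex)
        loss = 0.0
        for (r, c), a in zip(positions, amplitudes):
            psi = fwd(probe * obj[r:r + h, c:c + w])
            loss += np.sum((np.abs(psi) - a) ** 2)
            # Wirtinger gradient dL/dO*: back-propagate the amplitude residual
            residual = psi - a * np.exp(1j * np.angle(psi))
            grad[r:r + h, c:c + w] += np.conj(probe) * adj(residual)
        losses.append(loss)
        obj -= step * grad
    return obj, losses


# Simulated noise-free data from a phase object
rng = np.random.default_rng(0)
true_obj = np.exp(1j * rng.uniform(0, 1, (48, 48)))
yy, xx = np.mgrid[-12:12, -12:12]
probe = (xx**2 + yy**2 < 8**2).astype(complex)
positions = [(r, c) for r in range(0, 25, 6) for c in range(0, 25, 6)]
amps = np.stack([np.abs(fwd(probe * true_obj[r:r + 24, c:c + 24]))
                 for r, c in positions])
obj, losses = reconstruct(amps, probe, positions, true_obj.shape)
print(losses[0], losses[-1])  # the loss decreases over the iterations
```

In an AD framework only the `loss` line would need to be written; the `grad` line is what automatic differentiation derives from it. The same structure extends to refining the probe jointly, which this sketch omits.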
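For the noise-aware fine-tuning, the key ingredient is the likelihood of the measured counts. Below is a minimal NumPy sketch of one common way to write a negative log-likelihood for mixed Poisson-Gaussian noise: the exact mixture is approximated by a Gaussian whose variance is the expected photon count plus the read-noise variance σ². This approximation, the function name `poisson_gaussian_nll`, and the synthetic values are assumptions for illustration; the published method may model the noise differently.

```python
import numpy as np


def poisson_gaussian_nll(model_intensity, measured, sigma, eps=1e-12):
    """Approximate negative log-likelihood (constants dropped) for
    measured = Poisson(model_intensity) + Normal(0, sigma**2).

    The Poisson-Gaussian mixture is replaced by a Gaussian with
    mean model_intensity and variance model_intensity + sigma**2.
    """
    var = np.maximum(model_intensity, 0.0) + sigma**2 + eps
    return 0.5 * np.sum((measured - model_intensity) ** 2 / var + np.log(var))


# Synthetic counts: shot noise plus Gaussian read noise
rng = np.random.default_rng(1)
true_intensity = rng.uniform(5, 200, size=10_000)
sigma = 3.0
measured = rng.poisson(true_intensity) + rng.normal(0.0, sigma, size=true_intensity.shape)

# The NLL should be smallest when the model matches the true intensity
for scale in (0.5, 1.0, 2.0):
    print(scale, poisson_gaussian_nll(scale * true_intensity, measured, sigma))
```

In a gradient-based reconstruction, `model_intensity` would be |ψ|² from the forward model. Setting `sigma` to zero reduces the expression to a Gaussian approximation of pure shot noise.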
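The wavelength-multiplexed model rests on one physical fact: the spectral components of a broadband beam are mutually incoherent, so the detector records the weighted sum of their intensities rather than of their fields. The sketch below shows only that forward model, assuming each component has its own probe and object and has already been resampled onto a common detector grid (in a real setup the far-field pixel scale depends on the wavelength). The function name `multiplexed_pattern` and the example spectrum are hypothetical.

```python
import numpy as np


def multiplexed_pattern(obj_modes, probe_modes, weights):
    """Far-field intensity of a beam with several mutually incoherent
    spectral components: sum_k w_k |FFT(P_k * O_k)|^2.

    Assumes all components are sampled on a common detector grid.
    """
    intensity = np.zeros(probe_modes[0].shape)
    for o_k, p_k, w_k in zip(obj_modes, probe_modes, weights):
        psi_k = np.fft.fftshift(np.fft.fft2(p_k * o_k, norm="ortho"))
        intensity += w_k * np.abs(psi_k) ** 2  # add intensities, not fields
    return intensity


# Two spectral components with different probes and object responses
rng = np.random.default_rng(2)
shape = (32, 32)
probes = [rng.normal(size=shape) + 1j * rng.normal(size=shape) for _ in range(2)]
objects = [np.exp(1j * rng.uniform(0, 1, shape)) for _ in range(2)]
weights = [0.7, 0.3]  # hypothetical relative spectral weights
pattern = multiplexed_pattern(objects, probes, weights)
```

Because the pattern is linear in the weights, unknown or fluctuating spectral weights could in principle be treated as additional parameters of the reconstruction; whether the published algorithm does exactly this is not stated here.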
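A low-dimensional latent space makes the loss landscape something one can actually plot. The sketch below evaluates a ptychographic amplitude loss on a 2D grid of latent vectors. The "decoder" is a hypothetical stand-in (a fixed random linear map to a phase-only object), not the trained autoencoder from the paper, and the latent space is only two-dimensional so the grid covers it completely; for a higher-dimensional latent space, `loss_slice` evaluates a 2D slice spanned by two chosen directions.

```python
import numpy as np


def loss_slice(loss_fn, z0, d1, d2, extent=1.0, n=21):
    """Evaluate loss_fn on the plane z0 + a*d1 + b*d2, a, b in [-extent, extent]."""
    coords = np.linspace(-extent, extent, n)
    grid = np.empty((n, n))
    for i, a in enumerate(coords):
        for j, b in enumerate(coords):
            grid[i, j] = loss_fn(z0 + a * d1 + b * d2)
    return coords, grid


# Toy problem (every component here is hypothetical)
rng = np.random.default_rng(0)
size, latent_dim = 32, 2
basis = rng.normal(size=(size * size, latent_dim))  # stand-in decoder weights


def decode(z):
    # Hypothetical decoder: latent vector -> phase-only object
    return np.exp(1j * (basis @ z)).reshape(size, size)


yy, xx = np.mgrid[-8:8, -8:8]
probe = (xx**2 + yy**2 < 6**2).astype(complex)
positions = [(r, c) for r in range(0, 17, 4) for c in range(0, 17, 4)]


def amplitudes(obj):
    # Far-field amplitudes for every scan position
    return np.stack([np.abs(np.fft.fft2(probe * obj[r:r + 16, c:c + 16], norm="ortho"))
                     for r, c in positions])


z_true = np.array([0.3, -0.2])
measured = amplitudes(decode(z_true))


def loss(z):
    return np.sum((amplitudes(decode(z)) - measured) ** 2)


coords, grid = loss_slice(loss, z_true, np.array([1.0, 0.0]), np.array([0.0, 1.0]))
# grid[i, j] is the loss at z_true + coords[i] * e1 + coords[j] * e2
```

Plotting `grid` (for example with Matplotlib's `contourf`) shows the landscape around the true latent vector. Replacing `measured` with noisy data and comparing the resulting grids is one way to see how noise changes the landscape's shape.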

Maker Space at Utrecht University

In 2022, together with a small team of staff members from physics, biology, and informatics, we set up the first maker space for digital fabrication at Utrecht University: Lili’s Proto Lab. Working towards the grand opening was a lot of fun, and I documented the first wave of (student) projects in the 2022 annual report.